I spent about six years doing keyword research more or less the same way. Pull data from the tools, look at search volume and difficulty, find the intersection of reasonable volume and achievable competition, cluster related terms, build content to target the clusters. It worked. Rankings came. Traffic grew. The approach was systematic and predictable and delivered what it was supposed to deliver.

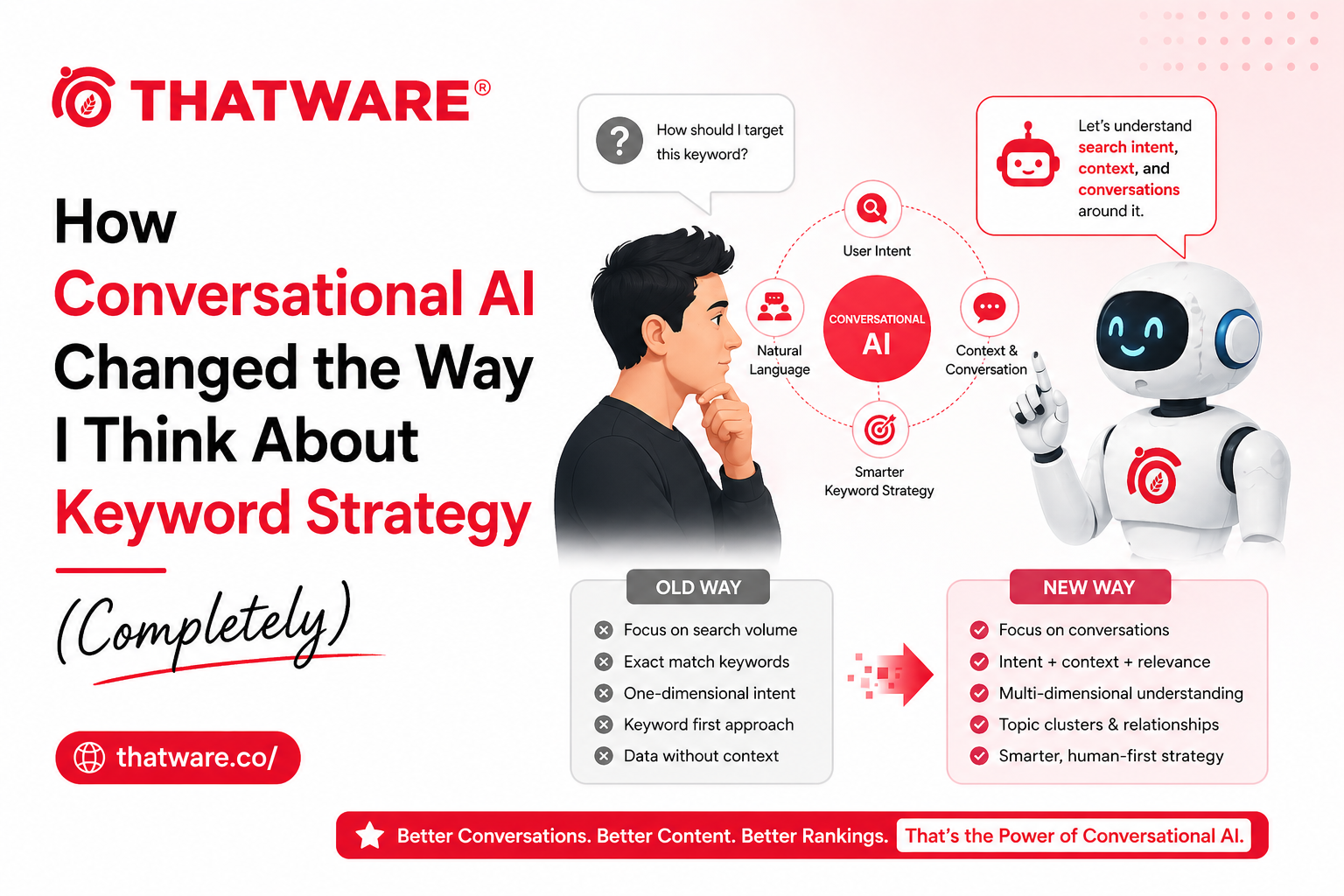

Then I started paying serious attention to how people actually interact with conversational AI – how they phrase questions to ChatGPT versus how they’d phrase the same question to Google – and the keyword framework I’d been using started to feel like it was measuring the right thing in the wrong environment. Not wrong exactly, but incomplete in ways that increasingly matter.

Here’s how that shift in thinking changed the way I approach keyword and content strategy.

The Fundamental Difference in Query Behavior

Traditional search queries are compressed. People have learned that Google responds better to keywords than to natural language, so they compress their questions into search-optimized fragments. “Best CRM small business.” “Running shoes flat feet.” “SEO agency Chicago.” These are not how people talk. They’re how people have learned to type to get search results.

Conversational AI interactions are uncompressed. When someone asks ChatGPT or Perplexity a question, they use natural language. “What’s the best CRM for a small business that doesn’t have a dedicated sales team and is mainly trying to manage customer follow-ups?” “What running shoes would you recommend for someone with flat feet who runs about 20 miles a week on pavement?” “How do I evaluate SEO agencies and know if they’re actually going to deliver results?”

These are the same informational needs as the compressed queries, expressed completely. Optimizing content for the compressed version while ignoring the full-form version increasingly misses the users who arrive through AI-mediated search.

What Conversational Query Optimization Changes

The full-form question version of a search query contains information that the compressed version strips out: the user’s context, their constraints, their level of sophistication, the specific outcome they’re trying to achieve. That information changes what the optimal answer looks like.

Content that answers “best CRM small business” needs to be comprehensive and somewhat general – it doesn’t know who’s asking or why. Content that answers “what’s the best CRM for a small business that doesn’t have a dedicated sales team and is mainly managing customer follow-ups?” can be specific in ways that are actually more useful – and that’s the content conversational AI is more likely to surface in response to detailed questions.

LLM conversational SEO services address this by expanding keyword strategy to include the full-form question landscape, not just the compressed keyword set. This means researching how people phrase these questions in conversational contexts – forums, social platforms, customer support logs, sales call recordings – rather than relying only on keyword tools that capture compressed search queries.
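One way to start that research is to pull the long, fully phrased questions out of text you already have. The snippet below is a minimal sketch, not a finished tool: the function name `extract_questions`, the eight-word threshold, and the sample text are all my own assumptions, and the sentence splitting is deliberately naive.

```python
import re


def extract_questions(text, min_words=8):
    """Return sentences that end in '?' and have at least min_words words.

    Assumption: splitting on whitespace after '.', '!' or '?' is good enough
    for informal text such as forum posts or support tickets.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [
        s for s in sentences
        if s.endswith("?") and len(s.split()) >= min_words
    ]


# Hypothetical support-log excerpt, invented for illustration.
sample = (
    "Hi there. What's the best CRM for a small business without "
    "a dedicated sales team? Thanks! Any ideas?"
)

for question in extract_questions(sample):
    print(question)
```

The word threshold is what separates full-form questions from short ones like “Any ideas?”, so it is worth tuning against your own data.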

Intent at Depth vs. Intent at Surface

Traditional keyword intent categorization is useful but shallow: informational, navigational, commercial, transactional. Someone searching “running shoes” has commercial intent. Someone searching “buy Nike Pegasus size 10” has transactional intent. The categorization is correct but doesn’t tell you much about what the content needs to do.

Conversational intent is deeper and more specific. When someone asks a conversational AI system “what running shoes would be best for someone with plantar fasciitis who also needs something that works on trails?” – that’s commercial intent, but it’s commercial intent with very specific constraints that a well-informed answer can directly address.

Content built for conversational intent acknowledges and addresses the constraints the user is operating under. It doesn’t just describe the products; it reasons about fit. It acknowledges tradeoffs. It helps the user make a decision given their specific situation, rather than presenting a generic set of options.

This is harder to produce at scale than keyword-targeted content. It requires genuine understanding of the customer’s decision context. It often requires domain expertise that can’t be faked. Which is exactly why it’s becoming more valuable – the barrier to producing it is real.

Clusters vs. Journeys: Rethinking Content Architecture

Traditional content architecture in SEO is built around topic clusters: a pillar page for a broad topic surrounded by cluster pages for subtopics, all linked together. This structure is good. It communicates topical authority to search systems.

Conversational AI optimization pushes the architecture further. Instead of organizing around topic clusters, the most effective architecture for AI search presence is organized around user journeys – the sequences of questions that someone moves through as they go from first awareness of a problem to a decision.

The journey for a SaaS buyer might go from “what is [category] software?” to “how does [category] software work?” to “what are the best [category] tools?” to “how do I evaluate [category] vendors?” to “what should I ask in a [category] demo?” Each of these is a different content piece. But organized as a journey – with clear connections between them and content that acknowledges where the user is in their decision-making at each stage – they serve AI systems that need to synthesize multi-part questions about a topic much better than isolated cluster pages do.
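To make the journey idea concrete, here is a small sketch that treats the question sequence above as an ordered list and works out which piece each one should link back and forward to. The question templates come from the example above; the names `JOURNEY_TEMPLATES`, `build_journey`, and `adjacent_links` are hypothetical, as is the idea of storing the plan as a dictionary.

```python
# Question templates taken from the SaaS buyer example, in journey order.
JOURNEY_TEMPLATES = [
    "what is {category} software?",
    "how does {category} software work?",
    "what are the best {category} tools?",
    "how do I evaluate {category} vendors?",
    "what should I ask in a {category} demo?",
]


def build_journey(category):
    """Fill the templates for one product category, preserving order."""
    return [t.format(category=category) for t in JOURNEY_TEMPLATES]


def adjacent_links(questions):
    """Map each question to the previous and next question in the journey."""
    plan = {}
    last = len(questions) - 1
    for i, question in enumerate(questions):
        plan[question] = {
            "previous": questions[i - 1] if i > 0 else None,
            "next": questions[i + 1] if i < last else None,
        }
    return plan


journey = build_journey("CRM")
for question, links in adjacent_links(journey).items():
    print(question, "->", links["next"])
```

A plan like this is only a checklist for internal linking and for the “where you are now” framing in each piece. It does not say anything about how an AI system will actually read the pages.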

Measuring What’s Changed and What Hasn’t

Measurement for AI search optimization services is still maturing, but a practical framework for evaluating whether your conversational keyword strategy is working combines traditional metrics with a newer layer of AI-visibility metrics.

Traditional rankings still matter – they’re the clearest signal of topical authority. Measure them. But also measure whether your content is appearing in AI-generated answers for the full-form versions of important queries. Test this manually on a regular cadence: ask ChatGPT, Perplexity, and Google’s AI Overviews the conversational versions of your priority questions and document whether your brand appears.
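If you paste the answers you collect into a simple log, a few lines of code can turn that log into an appearance rate per system. This is a rough sketch under my own assumptions: the `AnswerCheck` record, the function names, and the brand “Acme CRM” are all invented for illustration, and the answers are entered by hand rather than fetched from any API.

```python
import re
from dataclasses import dataclass


@dataclass
class AnswerCheck:
    """One manually recorded test of one question on one AI system."""
    date: str
    system: str
    question: str
    answer_text: str


def mentions_brand(answer_text, brand_terms):
    """True if any brand term appears as a whole word or phrase (case-insensitive)."""
    for term in brand_terms:
        pattern = r"(?<!\w)" + re.escape(term) + r"(?!\w)"
        if re.search(pattern, answer_text, flags=re.IGNORECASE):
            return True
    return False


def appearance_rate_by_system(checks, brand_terms):
    """Share of recorded answers per system that mention the brand."""
    totals, hits = {}, {}
    for check in checks:
        totals[check.system] = totals.get(check.system, 0) + 1
        if mentions_brand(check.answer_text, brand_terms):
            hits[check.system] = hits.get(check.system, 0) + 1
    return {system: hits.get(system, 0) / totals[system] for system in totals}


# Hypothetical log entries; the brand and the answer texts are made up.
log = [
    AnswerCheck("2024-05-01", "ChatGPT", "best CRM for follow-ups?",
                "Options include Acme CRM and a few others."),
    AnswerCheck("2024-05-01", "ChatGPT", "how to evaluate CRM vendors?",
                "Start by listing your must-have features."),
    AnswerCheck("2024-05-01", "Perplexity", "best CRM for follow-ups?",
                "Many small teams use acme crm for this."),
]

print(appearance_rate_by_system(log, ["Acme CRM"]))
```

AI answers vary from run to run, so a rate from a handful of checks is a direction-of-travel signal rather than a precise measurement.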

Track whether featured snippet wins are increasing – these are the traditional search equivalent of AI answer appearances, and content that wins featured snippets consistently is often the same content that gets cited in AI answers. The overlap isn’t perfect but it’s substantial enough to be useful as a signal.

Track branded search volume trend as a proxy for top-of-funnel AI exposure. People who encounter your brand in AI-generated answers and then search for it directly show up in branded search data. Consistent growth in branded search alongside conversational optimization investment suggests the work is producing AI-mediated brand exposure.
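For the branded search trend, comparing the most recent few months against the few months before them smooths out single-month noise. The sketch below assumes you export monthly branded search volumes yourself (for example from your search analytics tool); the function name `trailing_growth`, the three-month window, and the numbers are illustrative assumptions only.

```python
def trailing_growth(monthly_volumes, window=3):
    """Relative change between the last `window` months and the `window` before them.

    Returns a fraction, e.g. 0.2 for 20% growth.
    """
    if len(monthly_volumes) < 2 * window:
        raise ValueError("need at least 2 * window months of data")
    recent = monthly_volumes[-window:]
    prior = monthly_volumes[-2 * window:-window]
    prior_avg = sum(prior) / window
    recent_avg = sum(recent) / window
    if prior_avg == 0:
        raise ValueError("prior period average is zero; growth is undefined")
    return (recent_avg - prior_avg) / prior_avg


# Made-up monthly branded search volumes, oldest first.
volumes = [100, 100, 100, 110, 120, 130]
print(f"{trailing_growth(volumes):.0%}")
```

Growth on its own does not prove the exposure came from AI answers. It is only suggestive when it lines up with the period in which you invested in conversational optimization.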

The keyword strategy changes that conversational AI is driving aren’t a replacement for traditional keyword research. They’re an expansion – adding the full-form question landscape to the compressed keyword map, organizing content around user journeys rather than just topic clusters, and building the content depth that makes AI systems comfortable citing your brand as a reliable source. That’s a bigger job than traditional keyword targeting, but it’s the job the search landscape increasingly requires.